
Deleted member 180663

Guest

(My health is not at its best, so I am adding the 7th one too. Wish me luck. Only a few days remain.)

Unicode, formally The Unicode Standard, is a text encoding standard maintained by the Unicode Consortium, designed to support the use of text in all of the world's writing systems that can be digitized. Version 16.0 of the standard defines 154,998 characters and 168 scripts used in various ordinary, literary, academic, and technical contexts.


Many common characters, including numerals, punctuation, and other symbols, are unified within the standard and are not treated as specific to any given writing system. Unicode encodes 3790 emoji, with the continued development thereof conducted by the Consortium as a part of the standard. Moreover, the widespread adoption of Unicode was in large part responsible for the initial popularization of emoji outside of Japan. Unicode is ultimately capable of encoding more than 1.1 million characters.
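The "more than 1.1 million" figure follows from the size of the code space. Here is a small sketch of the arithmetic, assuming the standard range U+0000 to U+10FFFF and the 2,048 surrogate code points reserved for UTF-16 (the constant names are just for illustration):

```python
# Size of the Unicode code space (illustrative constant names).
MAX_CODE_POINT = 0x10FFFF              # highest code point
SURROGATES = 0xDFFF - 0xD800 + 1       # reserved for UTF-16 surrogate pairs

total_code_points = MAX_CODE_POINT + 1        # 17 planes of 65,536 code points
encodable = total_code_points - SURROGATES    # code points usable for characters

print(total_code_points)  # 1114112
print(encodable)          # 1112064
```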

Unicode has largely supplanted the previous environment of a myriad of incompatible character sets, each used within different locales and on different computer architectures. Unicode is used to encode the vast majority of text on the Internet, including most web pages, and relevant Unicode support has become a common consideration in contemporary software development.

The Unicode character repertoire is synchronized with ISO/IEC 10646, each being code-for-code identical with one another. However, The Unicode Standard is more than just a repertoire within which characters are assigned. To aid developers and designers, the standard also provides charts and reference data, as well as annexes explaining concepts germane to various scripts, providing guidance for their implementation. Topics covered by these annexes include character normalization, character composition and decomposition, collation, and directionality.

Unicode text is processed and stored as binary data using one of several encodings, which define how to translate the standard's abstracted codes for characters into sequences of bytes. The Unicode Standard itself defines three encodings: UTF-8, UTF-16, and UTF-32, though several others exist. Of these, UTF-8 is the most widely used by a large margin, in part due to its backwards-compatibility with ASCII.
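To make the difference between the three encodings concrete, here is a minimal sketch that measures how many bytes each one needs per character. The sample string and the helper name `encoded_sizes` are my own choices, not part of the standard:

```python
text = "aé€😀"  # characters that need 1, 2, 3 and 4 bytes in UTF-8

def encoded_sizes(s):
    """Return the byte length of s in each of the three standard encodings."""
    return {
        "utf-8": len(s.encode("utf-8")),
        "utf-16": len(s.encode("utf-16-le")),  # "-le" variant: no byte-order mark
        "utf-32": len(s.encode("utf-32-le")),
    }

for ch in text:
    print(f"U+{ord(ch):04X}", encoded_sizes(ch))

# ASCII text is byte-for-byte identical in UTF-8.
assert "plain ASCII".encode("utf-8") == "plain ASCII".encode("ascii")
```

UTF-32 always uses four bytes, UTF-16 uses two or four, and UTF-8 uses one to four, with ASCII staying at one byte.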


Unicode, in the form of UTF-8, has been the most common encoding for the World Wide Web since 2008. It has near-universal adoption, and much of the non-UTF-8 content is found in other Unicode encodings, e.g. UTF-16. As of 2024, UTF-8 accounts for on average 98.3% of all web pages (and 983 of the top 1,000 highest-ranked web pages). Although many pages only use ASCII characters to display content, UTF-8 was designed with 7-bit ASCII as a subset, and almost no websites now declare their encoding to be only ASCII instead of UTF-8. Over a third of the languages tracked have 100% UTF-8 use.

All internet protocols maintained by the Internet Engineering Task Force, e.g. FTP, have required support for UTF-8 since the publication of RFC 2277 in 1998, which specified that all IETF protocols "MUST be able to use the UTF-8 charset".

Unicode has become the dominant scheme for the internal processing and storage of text. Although a great deal of text is still stored in legacy encodings, Unicode is used almost exclusively for building new information processing systems. Early adopters tended to use UCS-2 (the fixed-length two-byte obsolete precursor to UTF-16) and later moved to UTF-16 (the variable-length current standard), as this was the least disruptive way to add support for non-BMP characters. The best known such system is Windows NT (and its descendants, 2000, XP, Vista, 7, 8, 10, and 11), which uses UTF-16 as the sole internal character encoding. The Java and .NET bytecode environments, macOS, and KDE also use it for internal representation. Partial support for Unicode can be installed on Windows 9x through the Microsoft Layer for Unicode.
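The move from UCS-2 to UTF-16 hinges on surrogate pairs: a code point above U+FFFF is split across two 16-bit units. This is a minimal sketch of that split, with an illustrative function name; it is not taken from any of the systems mentioned:

```python
def to_surrogate_pair(code_point):
    """Split a non-BMP code point into UTF-16 high and low surrogates."""
    if not 0x10000 <= code_point <= 0x10FFFF:
        raise ValueError("only code points above U+FFFF need a surrogate pair")
    offset = code_point - 0x10000        # 20-bit value
    high = 0xD800 + (offset >> 10)       # top 10 bits
    low = 0xDC00 + (offset & 0x3FF)      # bottom 10 bits
    return high, low

high, low = to_surrogate_pair(0x1F600)   # U+1F600 GRINNING FACE
print(f"{high:04X} {low:04X}")           # D83D DE00
```

A fixed two-byte encoding such as UCS-2 has no room for this second unit, which is why it cannot represent characters outside the BMP.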

UTF-8 (originally developed for Plan 9)[80] has become the main storage encoding on most Unix-like operating systems (though others are also used by some libraries) because it is a relatively easy replacement for traditional extended ASCII character sets. UTF-8 is also the most common Unicode encoding used in HTML documents on the World Wide Web.

Multilingual text-rendering engines which use Unicode include Uniscribe and DirectWrite for Microsoft Windows, ATSUI and Core Text for macOS, and Pango for GTK+ and the GNOME desktop.


Because keyboard layouts cannot have simple key combinations for all characters, several operating systems provide alternative input methods that allow access to the entire repertoire.

ISO/IEC 14755, which standardises methods for entering Unicode characters from their code points, specifies several methods. There is the Basic method, where a beginning sequence is followed by the hexadecimal representation of the code point and the ending sequence. There is also a screen-selection entry method specified, where the characters are listed in a table on a screen, such as with a character map program.
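The core of the Basic method, turning typed hexadecimal digits into a character, can be sketched as below. The beginning and ending key sequences differ by platform and are not modelled here; the function name is hypothetical:

```python
def char_from_hex(hex_digits):
    """Return the character for a code point typed as hexadecimal digits."""
    code_point = int(hex_digits, 16)
    if 0xD800 <= code_point <= 0xDFFF:
        raise ValueError("surrogate code points are not characters")
    return chr(code_point)  # chr() raises ValueError above 0x10FFFF

print(char_from_hex("20AC"))  # €
```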

Online tools for finding the code point for a known character include Unicode Lookup by Jonathan Hedley and Shapecatcher by Benjamin Milde. In Unicode Lookup, one enters a search key (e.g. "fractions"), and a list of corresponding characters with their code points is returned. In Shapecatcher, based on Shape context, one draws the character in a box and a list of characters approximating the drawing, with their code points, is returned.
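A rough local analogue of a keyword search can be built on Python's `unicodedata` module. This only illustrates the idea and says nothing about how the online tools work internally; `search_names` is a made-up helper, and the results depend on the Unicode version bundled with the interpreter:

```python
import unicodedata

def search_names(keyword, limit=5):
    """Return (code point label, character, name) for names containing keyword."""
    keyword = keyword.upper()
    results = []
    for cp in range(0x110000):
        name = unicodedata.name(chr(cp), "")  # "" for unnamed code points
        if keyword in name:
            results.append((f"U+{cp:04X}", chr(cp), name))
            if len(results) == limit:
                break
    return results

for label, ch, name in search_names("fraction", limit=3):
    print(label, ch, name)
```

Going the other way, `unicodedata.lookup("VULGAR FRACTION ONE HALF")` returns the character for an exact name.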

MIME defines two different mechanisms for encoding non-ASCII characters in email, depending on whether the characters are in email headers (such as the "Subject:"), or in the text body of the message; in both cases, the original character set is identified as well as a transfer encoding. For email transmission of Unicode, the UTF-8 character set and the Base64 or the Quoted-printable transfer encoding are recommended, depending on whether much of the message consists of ASCII characters. The details of the two different mechanisms are specified in the MIME standards and generally are hidden from users of email software.
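Both mechanisms can be seen with Python's standard `email` package. This is a minimal sketch with made-up sample text; the header goes through an encoded-word, while the body gets a charset and a transfer encoding (the package picks Base64 for UTF-8 bodies by default):

```python
from email.header import Header, decode_header
from email.mime.text import MIMEText

subject = "Grüße aus Zürich"  # sample text, not from the original post

# Header mechanism: an encoded-word naming the charset and transfer encoding.
encoded_subject = Header(subject, "utf-8").encode()

# Body mechanism: charset parameter plus a Content-Transfer-Encoding header.
message = MIMEText("Schöne Grüße!", "plain", "utf-8")
message["Subject"] = encoded_subject

raw, charset = decode_header(encoded_subject)[0]
print(encoded_subject)
print(raw.decode(charset))
print(message["Content-Transfer-Encoding"])
```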

The IETF has defined a framework for internationalized email using UTF-8, and has updated several protocols in accordance with that framework.

The adoption of Unicode in email has been very slow. Some East Asian text is still encoded in encodings such as ISO-2022, and some devices, such as mobile phones, still cannot correctly handle Unicode data. Support has been improving, however. Many major free mail providers such as Yahoo! Mail, Gmail, and Outlook.com support it.


All W3C recommendations have used Unicode as their document character set since HTML 4.0. Web browsers have supported Unicode, especially UTF-8, for many years. There used to be display problems resulting primarily from font-related issues; e.g. version 6 and older of Microsoft Internet Explorer did not render many code points unless explicitly told to use a font that contains them.

Although syntax rules may affect the order in which characters are allowed to appear, XML (including XHTML) documents, by definition,[91] comprise characters from most of the Unicode code points, with the exception of most of the C0 control codes, the surrogate code points U+D800 to U+DFFF, and U+FFFE and U+FFFF.
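The permitted ranges are given by the `Char` production of XML 1.0. A minimal sketch of that check follows, with an illustrative function name (XML 1.1 uses somewhat different rules, which are not covered here):

```python
def is_xml10_char(ch):
    """True if ch matches the XML 1.0 Char production."""
    cp = ord(ch)
    return (
        cp in (0x9, 0xA, 0xD)          # tab, line feed, carriage return
        or 0x20 <= cp <= 0xD7FF        # leaves out the other C0 controls
        or 0xE000 <= cp <= 0xFFFD      # leaves out surrogates, U+FFFE, U+FFFF
        or 0x10000 <= cp <= 0x10FFFF   # supplementary planes
    )

print(is_xml10_char("A"), is_xml10_char("\x00"))  # True False
```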

When specifying URIs, for example as URLs in HTTP requests, non-ASCII characters must be percent-encoded.
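Percent-encoding can be tried out with Python's `urllib.parse`. By default `quote` encodes the UTF-8 bytes of each non-ASCII character as `%XX` and leaves `/` alone; the sample path is made up:

```python
from urllib.parse import quote, unquote

path = "/wiki/Zürich"      # sample path with one non-ASCII character
encoded = quote(path)      # UTF-8 bytes of "ü" become %C3%BC
print(encoded)             # /wiki/Z%C3%BCrich
assert unquote(encoded) == path
```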


Unicode is not in principle concerned with fonts per se, seeing them as implementation choices. Any given character may have many allographs, from the more common bold, italic and base letterforms to complex decorative styles. A font is "Unicode compliant" if the glyphs in the font can be accessed using code points defined in The Unicode Standard. The standard does not specify a minimum number of characters that must be included in the font; some fonts have quite a small repertoire.

Free and retail fonts based on Unicode are widely available, since TrueType and OpenType support Unicode (and Web Open Font Format (WOFF and WOFF2) is based on those). These font formats map Unicode code points to glyphs, but OpenType and TrueType font files are restricted to 65,535 glyphs. Collection files provide a "gap mode" mechanism for overcoming this limit in a single font file. (Each font within the collection still has the 65,535 limit, however.) A TrueType Collection file would typically have a file extension of ".ttc".

Thousands of fonts exist on the market, but fewer than a dozen fonts—sometimes described as "pan-Unicode" fonts—attempt to support the majority of Unicode's character repertoire. Instead, Unicode-based fonts typically focus on supporting only basic ASCII and particular scripts or sets of characters or symbols. Several reasons justify this approach: applications and documents rarely need to render characters from more than one or two writing systems; fonts tend to demand resources in computing environments; and operating systems and applications show increasing intelligence in regard to obtaining glyph information from separate font files as needed, i.e., font substitution. Furthermore, designing a consistent set of rendering instructions for tens of thousands of glyphs constitutes a monumental task; such a venture passes the point of diminishing returns for most typefaces.

Unicode partially addresses the newline problem that occurs when trying to read a text file on different platforms. Unicode defines a large number of characters that conforming applications should recognize as line terminators.

In terms of the newline, Unicode introduced U+2028 LINE SEPARATOR and U+2029 PARAGRAPH SEPARATOR. This was an attempt to provide a Unicode solution to encoding paragraphs and lines semantically, potentially replacing all of the various platform solutions. In doing so, Unicode does provide a way around the historical platform-dependent solutions. Nonetheless, few if any Unicode solutions have adopted these Unicode line and paragraph separators as the sole canonical line ending characters. However, a common approach to solving this issue is through newline normalization. This is achieved with the Cocoa text system in macOS and also with W3C XML and HTML recommendations. In this approach, every possible newline character is converted internally to a common newline (which one does not really matter since it is an internal operation just for rendering). In other words, the text system can correctly treat the character as a newline, regardless of the input's actual encoding.
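Newline normalization can be sketched in a few lines of Python, since `str.splitlines` already treats CR, LF, CRLF, NEL (U+0085), U+2028 and U+2029 as line boundaries (it also splits on vertical tab, form feed and the file, group and record separators). This only illustrates the approach and is not how Cocoa or an XML parser implements it; the helper name is made up:

```python
def normalize_newlines(text, newline="\n"):
    """Replace every line terminator recognised by str.splitlines with one."""
    # Note: a terminator at the very end of the text is dropped by this sketch.
    return newline.join(text.splitlines())

mixed = "a\r\nb\rc\u2028d\u2029e\x85f"
print(normalize_newlines(mixed).split("\n"))  # ['a', 'b', 'c', 'd', 'e', 'f']
```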


As of Unicode version 16.0, there are 155,063 characters with code points, covering 168 modern and historical scripts, as well as multiple symbol sets. This includes the 1,062 characters in the Multilingual European Character Set 2 (MES-2) subset, and some additional related characters.


North Indic, South Indic, Ethiopic, Thaana, Canadian syllabics

Notes: a Featural-alphabetic. b Limited.

HTML and XML provide ways to reference Unicode characters when the characters themselves either cannot or should not be used. A numeric character reference refers to a character by its Universal Character Set/Unicode code point, and a character entity reference refers to a character by a predefined name.

A numeric character reference uses the format

&#nnnn;

or

&#xhhhh;

where nnnn is the code point in decimal form, and hhhh is the code point in hexadecimal form. The x must be lowercase in XML documents. The nnnn or hhhh may be any number of digits and may include leading zeros. The hhhh may mix uppercase and lowercase, though uppercase is the usual style.
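Both numeric forms can be generated and decoded with a few lines of Python. The helper `numeric_reference` is hypothetical; `html.unescape` is the standard-library decoder:

```python
import html

def numeric_reference(ch, hexadecimal=True):
    """Build a numeric character reference for a single character."""
    cp = ord(ch)
    return f"&#x{cp:X};" if hexadecimal else f"&#{cp};"

print(numeric_reference("€"))          # &#x20AC;
print(numeric_reference("€", False))   # &#8364;

# Both forms, with or without leading zeros, decode to the same character.
assert html.unescape("&#x20AC;") == html.unescape("&#08364;") == "€"
```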

In contrast, a character entity reference refers to a character by the name of an entity which has the desired character as its replacement text. The entity must either be predefined (built into the markup language) or explicitly declared in a Document Type Definition (DTD). The format is the same as for any entity reference:

&name;

where name is the case-sensitive name of the entity. The semicolon is required.

Because numbers are harder for humans to remember than names, character entity references are most often written by humans, while numeric character references are most often produced by computer programs.
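For named references, Python's `html.entities` module carries the table of HTML entity names, which makes the case sensitivity easy to see. This sketch covers HTML's predefined names only; XML itself predefines just `amp`, `lt`, `gt`, `apos` and `quot`, and anything else must be declared in a DTD:

```python
import html
from html.entities import name2codepoint

print(html.unescape("&euro;"))              # €
print(f"U+{name2codepoint['euro']:04X}")    # U+20AC

# Entity names are case-sensitive: these are two different characters.
print(html.unescape("&eacute;"), html.unescape("&Eacute;"))  # é É
```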


65 characters, including DEL. All belong to the common script.
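The count of 65 can be checked against the character database shipped with Python by counting code points in general category `Cc` (control). A quick sketch:

```python
import unicodedata

# Collect every code point whose general category is Cc (control).
control = [cp for cp in range(0x110000)
           if unicodedata.category(chr(cp)) == "Cc"]

print(len(control))  # 65
print(f"U+{control[0]:04X} .. U+{control[-1]:04X}")  # U+0000 .. U+009F
```

These are the 32 C0 codes, DEL (U+007F), and the 32 C1 codes.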

Footnotes:

1 Control-C has typically been used as a "break" or "interrupt" key.

2 Control-D has been used to signal "end of file" for text typed in at the terminal on Unix / Linux systems. Windows, DOS, and older minicomputers used Control-Z for this purpose.

3 Control-G is an artifact of the days when teletypes were in use. Important messages could be signalled by striking the bell on the teletype. This was carried over on PCs by generating a buzz sound.

4 Line feed is used for "end of line" in text files on Unix / Linux systems.

5 Carriage Return (accompanied by line feed) is used as the "end of line" character by Windows, DOS, and most minicomputers other than Unix- / Linux-based systems.

6 Control-O has been the "discard output" key. Output is not sent to the terminal, but discarded, until another Control-O is typed.

7 Control-Q has been used to tell a host computer to resume sending output after it was stopped by Control-S.

8 Control-S has been used to tell a host computer to postpone sending output to the terminal. Output is suspended until restarted by the Control-Q key.

9 Control-U was originally used by Digital Equipment Corporation computers to cancel the current line of typed-in text. Other manufacturers used Control-X for this purpose.

10 Control-X was commonly used to cancel a line of input typed in at the terminal.

11 Control-Z has commonly been used on minicomputers, Windows and DOS systems to indicate "end of file" either on a terminal or in a text file. Unix / Linux systems use Control-D to indicate end-of-file at a terminal.
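The Control-key names in these footnotes map onto code points by the usual terminal convention of keeping only the low five bits of the letter's ASCII code. That convention is my assumption here and is not stated in the footnotes; the function name is illustrative:

```python
def control_code(letter):
    """Return the C0 control code conventionally produced by Control + letter."""
    return ord(letter.upper()) & 0x1F   # keep the low five bits

for key in "CDGZ":
    print(f"Control-{key} -> U+{control_code(key):04X}")
# Control-C -> U+0003
# Control-D -> U+0004
# Control-G -> U+0007
# Control-Z -> U+001A
```

By the same rule, Control-J gives line feed (U+000A) and Control-M gives carriage return (U+000D).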

If you want to check out the rest: List of Unicode characters

There are still several more, such as numbers, symbols, fonts and text in different languages, scripts, codes and letters.

Check out this as well:

List of them

The Paranal Observatory of European Southern Observatory shooting a laser guide star to the Galactic Center


Astronomy is one of the oldest natural sciences. The early civilizations in recorded history made methodical observations of the night sky. These include the Egyptians, Babylonians, Greeks, Indians, Chinese, Maya, and many ancient indigenous peoples of the Americas. In the past, astronomy included disciplines as diverse as astrometry, celestial navigation, observational astronomy, and the making of calendars.

Professional astronomy is split into observational and theoretical branches. Observational astronomy is focused on acquiring data from observations of astronomical objects. This data is then analyzed using basic principles of physics. Theoretical astronomy is oriented toward the development of computer or analytical models to describe astronomical objects and phenomena. These two fields complement each other. Theoretical astronomy seeks to explain observational results and observations are used to confirm theoretical results.

Astronomy is one of the few sciences in which amateurs play an active role. This is especially true for the discovery and observation of transient events. Amateur astronomers have helped with many important discoveries, such as finding new comets.

Overview of types of observational astronomy by observed wavelengths and their observability


The main source of information about celestial bodies and other objects is visible light, or more generally electromagnetic radiation.[51] Observational astronomy may be categorized according to the corresponding region of the electromagnetic spectrum on which the observations are made. Some parts of the spectrum can be observed from the Earth's surface, while other parts are only observable from either high altitudes or outside the Earth's atmosphere. Specific information on these subfields is given below.

The Very Large Array in New Mexico, an example of a radio telescope


Radio astronomy uses radiation with wavelengths greater than approximately one millimeter, outside the visible range.[52] Radio astronomy is different from most other forms of observational astronomy in that the observed radio waves can be treated as waves rather than as discrete photons. Hence, it is relatively easy to measure both the amplitude and phase of radio waves, whereas this is not as easily done at shorter wavelengths.[52]

Although some radio waves are emitted directly by astronomical objects, a product of thermal emission, most of the radio emission that is observed is the result of synchrotron radiation, which is produced when electrons spiral around magnetic field lines.[52] Additionally, a number of spectral lines produced by interstellar gas, notably the hydrogen spectral line at 21 cm, are observable at radio wavelengths.[8][52]
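Wavelength and frequency are tied together by the speed of light (frequency = c / wavelength), so the nominal 21 cm line can be converted to a frequency with a small sketch. The rounded wavelength from the text is used, so the result is only approximate:

```python
SPEED_OF_LIGHT = 299_792_458.0   # metres per second, exact by definition

def frequency_hz(wavelength_m):
    """Convert a vacuum wavelength in metres to a frequency in hertz."""
    return SPEED_OF_LIGHT / wavelength_m

print(f"{frequency_hz(0.21) / 1e9:.2f} GHz")    # 1.43 GHz for a nominal 21 cm
print(f"{frequency_hz(0.001) / 1e9:.2f} GHz")   # 299.79 GHz at one millimetre
```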

A wide variety of other objects are observable at radio wavelengths, including supernovae, interstellar gas, pulsars, and active galactic nuclei.[8][52]

ALMA Observatory is one of the highest observatory sites on Earth. Atacama, Chile.[53]


Infrared astronomy is founded on the detection and analysis of infrared radiation, wavelengths longer than red light and outside the range of our vision. The infrared spectrum is useful for studying objects that are too cold to radiate visible light, such as planets, circumstellar disks or nebulae whose light is blocked by dust. The longer wavelengths of infrared can penetrate clouds of dust that block visible light, allowing the observation of young stars embedded in molecular clouds and the cores of galaxies. Observations from the Wide-field Infrared Survey Explorer (WISE) have been particularly effective at unveiling numerous galactic protostars and their host star clusters.[54][55] With the exception of infrared wavelengths close to visible light, such radiation is heavily absorbed by the atmosphere, or masked, as the atmosphere itself produces significant infrared emission. Consequently, infrared observatories have to be located in high, dry places on Earth or in space.[56] Some molecules radiate strongly in the infrared. This allows the study of the chemistry of space; more specifically it can detect water in comets.[57]

The Subaru Telescope (left) and Keck Observatory (center) on Mauna Kea, both examples of an observatory that operates at near-infrared and visible wavelengths. The NASA Infrared Telescope Facility (right) is an example of a telescope that operates only at near-infrared wavelengths.


Historically, optical astronomy, which has also been called visible light astronomy, is the oldest form of astronomy.[58] Images of observations were originally drawn by hand. In the late 19th century and most of the 20th century, images were made using photographic equipment. Modern images are made using digital detectors, particularly charge-coupled devices (CCDs), and recorded on modern media. Although visible light itself extends from approximately 4000 Å to 7000 Å (400 nm to 700 nm),[58] that same equipment can be used to observe some near-ultraviolet and near-infrared radiation.

Ultraviolet astronomy employs ultraviolet wavelengths between approximately 100 and 3200 Å (10 to 320 nm).[52] Light at those wavelengths is absorbed by the Earth's atmosphere, requiring observations at these wavelengths to be performed from the upper atmosphere or from space. Ultraviolet astronomy is best suited to the study of thermal radiation and spectral emission lines from hot blue stars (OB stars) that are very bright in this wave band. This includes the blue stars in other galaxies, which have been the targets of several ultraviolet surveys. Other objects commonly observed in ultraviolet light include planetary nebulae, supernova remnants, and active galactic nuclei.[52] However, as ultraviolet light is easily absorbed by interstellar dust, an adjustment of ultraviolet measurements is necessary.[52]

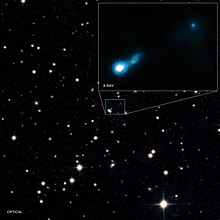

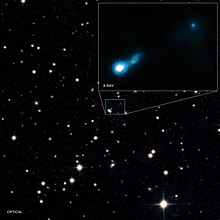

X-ray jet made from a supermassive black hole found by NASA's Chandra X-ray Observatory, made visible by light from the early Universe

X-ray astronomy uses X-ray wavelengths. Typically, X-ray radiation is produced by synchrotron emission (the result of electrons orbiting magnetic field lines), thermal emission from thin gases above 10⁷ (10 million) kelvins, and thermal emission from thick gases above 10⁷ kelvins.[52] Since X-rays are absorbed by the Earth's atmosphere, all X-ray observations must be performed from high-altitude balloons, rockets, or X-ray astronomy satellites. Notable X-ray sources include X-ray binaries, pulsars, supernova remnants, elliptical galaxies, clusters of galaxies, and active galactic nuclei.[52]

Gamma ray astronomy observes astronomical objects at the shortest wavelengths of the electromagnetic spectrum. Gamma rays may be observed directly by satellites such as the Compton Gamma Ray Observatory or by specialized telescopes called atmospheric Cherenkov telescopes.[52] The Cherenkov telescopes do not detect the gamma rays directly but instead detect the flashes of visible light produced when gamma rays are absorbed by the Earth's atmosphere.[59]

Most gamma-ray emitting sources are actually gamma-ray bursts, objects which only produce gamma radiation for a few milliseconds to thousands of seconds before fading away. Only 10% of gamma-ray sources are non-transient sources. These steady gamma-ray emitters include pulsars, neutron stars, and black hole candidates such as active galactic nuclei.[52]

In neutrino astronomy, astronomers use heavily shielded underground facilities such as SAGE, GALLEX, and Kamioka II/III for the detection of neutrinos. The vast majority of the neutrinos streaming through the Earth originate from the Sun, but 24 neutrinos were also detected from supernova 1987A.[52] Cosmic rays, which consist of very high energy particles (atomic nuclei) that can decay or be absorbed when they enter the Earth's atmosphere, result in a cascade of secondary particles which can be detected by current observatories.[60] Some future neutrino detectors may also be sensitive to the particles produced when cosmic rays hit the Earth's atmosphere.[52]

Gravitational-wave astronomy is an emerging field of astronomy that employs gravitational-wave detectors to collect observational data about distant massive objects. A few observatories have been constructed, such as the Laser Interferometer Gravitational-Wave Observatory (LIGO). LIGO made its first detection on 14 September 2015, observing gravitational waves from a binary black hole.[61] A second gravitational wave was detected on 26 December 2015, and additional observations should continue, although gravitational waves require extremely sensitive instruments.[62][63]

The combination of observations made using electromagnetic radiation, neutrinos or gravitational waves with other complementary information is known as multi-messenger astronomy.[64][65]

Star cluster Pismis 24 with a nebula


One of the oldest fields in astronomy, and in all of science, is the measurement of the positions of celestial objects. Historically, accurate knowledge of the positions of the Sun, Moon, planets and stars has been essential in celestial navigation (the use of celestial objects to guide navigation) and in the making of calendars.[66]: 39

Careful measurement of the positions of the planets has led to a solid understanding of gravitational perturbations, and an ability to determine past and future positions of the planets with great accuracy, a field known as celestial mechanics. More recently the tracking of near-Earth objects will allow for predictions of close encounters or potential collisions of the Earth with those objects.[67]

The measurement of stellar parallax of nearby stars provides a fundamental baseline in the cosmic distance ladder that is used to measure the scale of the Universe. Parallax measurements of nearby stars provide an absolute baseline for the properties of more distant stars, as their properties can be compared. Measurements of the radial velocity and proper motion of stars allow astronomers to plot the movement of these systems through the Milky Way galaxy. Astrometric results are the basis used to calculate the distribution of speculated dark matter in the galaxy.[68]
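The parallax baseline rests on a simple relation: a star's distance in parsecs is the reciprocal of its parallax angle in arcseconds. A minimal sketch, using a made-up parallax value rather than a measured one and an approximate parsec-to-light-year factor:

```python
LIGHT_YEARS_PER_PARSEC = 3.2616   # approximate conversion factor

def distance_parsecs(parallax_arcsec):
    """Distance in parsecs from an annual parallax in arcseconds (d = 1 / p)."""
    if parallax_arcsec <= 0:
        raise ValueError("parallax must be positive")
    return 1.0 / parallax_arcsec

example_parallax = 0.1            # hypothetical value, in arcseconds
d = distance_parsecs(example_parallax)
print(f"{d:.1f} pc, about {d * LIGHT_YEARS_PER_PARSEC:.1f} light-years")
# 10.0 pc, about 32.6 light-years
```

Smaller angles mean larger distances, which is why the method only works directly for relatively nearby stars.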

During the 1990s, the measurement of the stellar wobble of nearby stars was used to detect large extrasolar planets orbiting those stars.[69]

Theoretical astronomers use several tools including analytical models and computational numerical simulations; each has its particular advantages. Analytical models of a process are better for giving broader insight into the heart of what is going on. Numerical models reveal the existence of phenomena and effects otherwise unobserved.[70][71]

Theorists in astronomy endeavor to create theoretical models that are based on existing observations and known physics, and to predict observational consequences of those models. The observation of phenomena predicted by a model allows astronomers to select between several alternative or conflicting models. Theorists also modify existing models to take into account new observations. In some cases, a large amount of observational data that is inconsistent with a model may lead to abandoning it largely or completely, as for geocentric theory, the existence of luminiferous aether, and the steady-state model of cosmic evolution.

Phenomena modeled by theoretical astronomers include:

In the 21st century there remain important unanswered questions in astronomy. Some are cosmic in scope: for example, what are dark matter and dark energy? These dominate the evolution and fate of the cosmos, yet their true nature remains unknown.[127] What will be the ultimate fate of the universe?[128] Why is the abundance of lithium in the cosmos four times lower than predicted by the standard Big Bang model?[129] Others pertain to more specific classes of phenomena. For example, is the Solar System normal or atypical?[130] What is the origin of the stellar mass spectrum? That is, why do astronomers observe the same distribution of stellar masses—the initial mass function—apparently regardless of the initial conditions?[131] Likewise, questions remain about the formation of the first galaxies,[132] the origin of supermassive black holes,[133] the source of ultra-high-energy cosmic rays,[134] and more.

Is there other life in the Universe? Especially, is there other intelligent life? If so, what is the explanation for the Fermi paradox? The existence of life elsewhere has important scientific and philosophical implications.[135][136]

The study of chemicals found in space, including their formation, interaction and destruction, is called astrochemistry. These substances are usually found in molecular clouds, although they may also appear in low-temperature stars, brown dwarfs and planets. Cosmochemistry is the study of the chemicals found within the Solar System, including the origins of the elements and variations in the isotope ratios. Both of these fields represent an overlap of the disciplines of astronomy and chemistry. As "forensic astronomy", finally, methods from astronomy have been used to solve problems of art history[116][117] and occasionally of law.[118]


Cosmology (from Ancient Greek κόσμος (cosmos) 'the universe, the world' and λογία (logia) 'study of') is a branch of physics and metaphysics dealing with the nature of the universe, the cosmos. The term cosmology was first used in English in 1656 in Thomas Blount's Glossographia,[2] and in 1731 taken up in Latin by German philosopher Christian Wolff in Cosmologia Generalis.[3] Religious or mythological cosmology is a body of beliefs based on mythological, religious, and esoteric literature and traditions of creation myths and eschatology. In the science of astronomy, cosmology is concerned with the study of the chronology of the universe.

The Hubble eXtreme Deep Field (XDF) was completed in September 2012 and shows the farthest galaxies ever photographed at that time. Except for the few stars in the foreground (which are bright and easily recognizable because only they have diffraction spikes), every speck of light in the photo is an individual galaxy, some of them as old as 13.2 billion years; the observable universe is estimated to contain more than 2 trillion galaxies.[1]


Physical cosmology is the study of the observable universe's origin, its large-scale structures and dynamics, and the ultimate fate of the universe, including the laws of science that govern these areas.[4] It is investigated by scientists, including astronomers and physicists, as well as philosophers, such as metaphysicians, philosophers of physics, and philosophers of space and time. Because of this shared scope with philosophy, theories in physical cosmology may include both scientific and non-scientific propositions and may depend upon assumptions that cannot be tested. Physical cosmology is a sub-branch of astronomy that is concerned with the universe as a whole. Modern physical cosmology is dominated by the Big Bang Theory which attempts to bring together observational astronomy and particle physics;[5][6] more specifically, a standard parameterization of the Big Bang with dark matter and dark energy, known as the Lambda-CDM model.

Theoretical astrophysicist David N. Spergel has described cosmology as a "historical science" because "when we look out in space, we look back in time" due to the finite nature of the speed of light.[7]

Physics and astrophysics have played central roles in shaping our understanding of the universe through scientific observation and experiment. Physical cosmology was shaped through both mathematics and observation in an analysis of the whole universe. The universe is generally understood to have begun with the Big Bang, followed almost instantaneously by cosmic inflation, an expansion of space from which the universe is thought to have emerged 13.799 ± 0.021 billion years ago.[8] Cosmogony studies the origin of the universe, and cosmography maps the features of the universe.

In Diderot's Encyclopédie, cosmology is broken down into uranology (the science of the heavens), aerology (the science of the air), geology (the science of the continents), and hydrology (the science of waters).[9]

Metaphysical cosmology has also been described as the placing of humans in the universe in relationship to all other entities. This is exemplified by Marcus Aurelius's observation about a man's place in that relationship: "He who does not know what the world is does not know where he is, and he who does not know for what purpose the world exists, does not know who he is, nor what the world is."[10]

Physical cosmology is the branch of physics and astrophysics that deals with the study of the physical origins and evolution of the universe. It also includes the study of the nature of the universe on a large scale. In its earliest form, it was what is now known as "celestial mechanics," the study of the heavens. Greek philosophers Aristarchus of Samos, Aristotle, and Ptolemy proposed different cosmological theories. The geocentric Ptolemaic system was the prevailing theory until the 16th century when Nicolaus Copernicus, and subsequently Johannes Kepler and Galileo Galilei, proposed a heliocentric system. This is one of the most famous examples of epistemological rupture in physical cosmology.

Isaac Newton's Principia Mathematica, published in 1687, was the first description of the law of universal gravitation. It provided a physical mechanism for Kepler's laws and also allowed the anomalies in previous systems, caused by gravitational interaction between the planets, to be resolved. A fundamental difference between Newton's cosmology and those preceding it was the Copernican principle—that the bodies on Earth obey the same physical laws as all celestial bodies. This was a crucial philosophical advance in physical cosmology.

Modern scientific cosmology is widely considered to have begun in 1917 with Albert Einstein's publication of his final modification of general relativity in the paper "Cosmological Considerations of the General Theory of Relativity"[11] (although this paper was not widely available outside of Germany until the end of World War I). General relativity prompted cosmogonists such as Willem de Sitter, Karl Schwarzschild, and Arthur Eddington to explore its astronomical ramifications, which enhanced the ability of astronomers to study very distant objects. Physicists began changing the assumption that the universe was static and unchanging. In 1922, Alexander Friedmann introduced the idea of an expanding universe that contained moving matter.

In parallel to this dynamic approach to cosmology, one long-standing debate about the structure of the cosmos was coming to a climax – the Great Debate (1917 to 1922) – with early cosmologists such as Heber Curtis and Ernst Öpik determining that some nebulae seen in telescopes were separate galaxies far distant from our own.[12] While Heber Curtis argued for the idea that spiral nebulae were star systems in their own right as island universes, Mount Wilson astronomer Harlow Shapley championed the model of a cosmos made up of the Milky Way star system only. This difference of ideas came to a climax with the organization of the Great Debate on 26 April 1920 at the meeting of the U.S. National Academy of Sciences in Washington, D.C. The debate was resolved when Edwin Hubble detected Cepheid Variables in the Andromeda Galaxy in 1923 and 1924.[13][14] Their distance established spiral nebulae well beyond the edge of the Milky Way.

Subsequent modelling of the universe explored the possibility that the cosmological constant, introduced by Einstein in his 1917 paper, may result in an expanding universe, depending on its value. Thus the Big Bang model was proposed by the Belgian priest Georges Lemaître in 1927[15] which was subsequently corroborated by Edwin Hubble's discovery of the redshift in 1929[16] and later by the discovery of the cosmic microwave background radiation by Arno Penzias and Robert Woodrow Wilson in 1964.[17] These findings were a first step to rule out some of many alternative cosmologies.

Since around 1990, several dramatic advances in observational cosmology have transformed cosmology from a largely speculative science into a predictive science with precise agreement between theory and observation. These advances include observations of the microwave background from the COBE,[18] WMAP[19] and Planck satellites,[20] large new galaxy redshift surveys including 2dFGRS[21] and SDSS,[22] and observations of distant supernovae and gravitational lensing. These observations matched the predictions of the cosmic inflation theory, a modified Big Bang theory, and the specific version known as the Lambda-CDM model. This has led many to refer to modern times as the "golden age of cosmology".[23]

In 2014, the BICEP2 collaboration claimed that they had detected the imprint of gravitational waves in the cosmic microwave background. However, this result was later found to be spurious: the supposed evidence of gravitational waves was in fact due to interstellar dust.[24][25]

On 1 December 2014, at the Planck 2014 meeting in Ferrara, Italy, astronomers reported that the universe is 13.8 billion years old and composed of 4.9% atomic matter, 26.6% dark matter and 68.5% dark energy.[26]

Religious or mythological cosmology is a body of beliefs based on mythological, religious, and esoteric literature and traditions of creation and eschatology. Creation myths are found in most religions, and are typically split into five different classifications, based on a system created by Mircea Eliade and his colleague Charles Long.

Representation of the observable universe on a logarithmic scale. Distance from the Sun increases from center to edge. Planets and other celestial bodies were enlarged to appreciate their shapes.

Cosmology deals with the world as the totality of space, time and all phenomena. Historically, it has had quite a broad scope, and in many cases was found in religion.[28] Some questions about the Universe are beyond the scope of scientific inquiry but may still be interrogated through appeals to other philosophical approaches like dialectics. Some questions that are included in extra-scientific endeavors may include:[29][30] Charles Kahn, an important historian of philosophy, attributed the origins of ancient Greek cosmology to Anaximander.[31]

A blazar is an active galactic nucleus (AGN) with a relativistic jet (a jet composed of ionized matter traveling at nearly the speed of light) directed very nearly towards an observer. Relativistic beaming of electromagnetic radiation from the jet makes blazars appear much brighter than they would be if the jet were pointed in a direction away from Earth.[1] Blazars are powerful sources of emission across the electromagnetic spectrum and are observed to be sources of high-energy gamma ray photons. Blazars are highly variable sources, often undergoing rapid and dramatic fluctuations in brightness on short timescales (hours to days). Some blazar jets appear to exhibit superluminal motion, another consequence of material in the jet traveling toward the observer at nearly the speed of light.

The elliptical galaxy M87 emitting a relativistic jet, as seen by the Hubble Space Telescope. An active galaxy is classified as a blazar when its jet is pointing close to the line of sight. In the case of M87, because the angle between the jet and the line of sight is not small, its nucleus is not classified as a blazar, but rather as a radio galaxy.

The blazar category includes BL Lac objects and optically violently variable (OVV) quasars. The generally accepted theory is that BL Lac objects are intrinsically low-power radio galaxies while OVV quasars are intrinsically powerful radio-loud quasars. The name "blazar" was coined in 1978 by astronomer Edward Spiegel to denote the combination of these two classes.[2]

In visible-wavelength images, most blazars appear compact and pointlike, but high-resolution images reveal that they are located at the centers of elliptical galaxies.[3]

Blazars are important topics of research in astronomy and high-energy astrophysics. Blazar research includes investigation of the properties of accretion disks and jets, the central supermassive black holes and surrounding host galaxies, and the emission of high-energy photons, cosmic rays, and neutrinos.

In July 2018, the IceCube Neutrino Observatory team traced a neutrino that hit its Antarctica-based detector in September 2017 to its point of origin in a blazar 3.7 billion light-years away. This was the first time that a neutrino detector was used to locate an object in space.[4][5][6]

Sloan Digital Sky Survey image of blazar Markarian 421, illustrating the bright nucleus and elliptical host galaxy

Blazars, like all active galactic nuclei (AGN), are thought to be powered by material falling into a supermassive black hole in the core of the host galaxy. Gas, dust and the occasional star are captured and spiral into this central black hole, creating a hot accretion disk which generates enormous amounts of energy in the form of photons, electrons, positrons and other elementary particles. This region is relatively small, approximately 10^-3 parsecs in size.

There is also a larger opaque toroid extending several parsecs from the black hole, containing a hot gas with embedded regions of higher density. These "clouds" can absorb and re-emit energy from regions closer to the black hole. On Earth, the clouds are detected as emission lines in the blazar spectrum.

Perpendicular to the accretion disk, a pair of relativistic jets carries highly energetic plasma away from the AGN. The jet is collimated by a combination of intense magnetic fields and powerful winds from the accretion disk and toroid. Inside the jet, high energy photons and particles interact with each other and the strong magnetic field. These relativistic jets can extend as far as many tens of kiloparsecs from the central black hole.

All of these regions can produce a variety of observed energy, mostly in the form of a nonthermal spectrum ranging from very low-frequency radio to extremely energetic gamma rays, with a high polarization (typically a few percent) at some frequencies. The nonthermal spectrum consists of synchrotron radiation in the radio to X-ray range, and inverse Compton emission in the X-ray to gamma-ray region. A thermal spectrum peaking in the ultraviolet region and faint optical emission lines are also present in OVV quasars, but faint or non-existent in BL Lac objects.

The observed emission from a blazar is greatly enhanced by relativistic effects in the jet, a process called relativistic beaming. The bulk speed of the plasma that constitutes the jet can be in the range of 95%–99% of the speed of light, although individual particles move at higher speeds in various directions.

The relationship between the luminosity emitted in the rest frame of the jet and the luminosity observed from Earth depends on the characteristics of the jet. These include whether the luminosity arises from a shock front or a series of brighter blobs in the jet, as well as details of the magnetic fields within the jet and their interaction with the moving particles.

A simple model of beaming illustrates the basic relativistic effects connecting the luminosity in the rest frame of the jet, S_e, and the luminosity observed on Earth, S_o: S_o is proportional to S_e × D^2, where D is the Doppler factor.
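As a rough illustration of the simple model, the sketch below computes the Doppler factor and the resulting D^2 boost. The expression D = 1 / (γ (1 − β cos θ)) is the standard special-relativity form and is not spelled out in the text, so treat it as an assumption of this sketch. The function names and the sample speed (99% of the speed of light, taken from the range quoted earlier) are illustrative choices only.

```python
import math


def doppler_factor(beta: float, theta_deg: float) -> float:
    """Doppler factor D = 1 / (gamma * (1 - beta * cos(theta))).

    beta is the jet speed as a fraction of the speed of light and
    theta_deg is the angle between the jet and the line of sight.
    """
    gamma = 1.0 / math.sqrt(1.0 - beta * beta)
    return 1.0 / (gamma * (1.0 - beta * math.cos(math.radians(theta_deg))))


def simple_boost(beta: float, theta_deg: float) -> float:
    """Relative boost D^2 of the simple beaming model (S_o proportional to S_e * D^2)."""
    return doppler_factor(beta, theta_deg) ** 2


if __name__ == "__main__":
    # The boost falls off quickly as the jet turns away from the observer.
    for theta in (0, 5, 30, 90):
        print(f"theta = {theta:2d} deg  D^2 = {simple_boost(0.99, theta):.4g}")
```

This covers only the D^2 scaling; it does not attempt the more detailed treatment with three separate relativistic effects.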

When considered in much more detail, three relativistic effects are involved:

Consider a jet with an angle to the line of sight θ = 5° and a speed of 99.9% of the speed of light. The luminosity observed from Earth is 70 times greater than the emitted luminosity. However, if θ is at the minimum value of 0° the jet will appear 600 times brighter from Earth.

Relativistic beaming also has another critical consequence. The jet which is not approaching Earth will appear dimmer because of the same relativistic effects. Therefore, two intrinsically identical jets will appear significantly asymmetric. In the example given above any jet where θ > 35° will be observed on Earth as less luminous than it would be from the rest frame of the jet.

A further consequence is that a population of intrinsically identical AGN scattered in space with random jet orientations will look like a very inhomogeneous population on Earth. The few objects where θ is small will have one very bright jet, while the rest will apparently have considerably weaker jets. Those where θ varies from 90° will appear to have asymmetric jets.

This is the essence behind the connection between blazars and radio galaxies. AGN which have jets oriented close to the line of sight with Earth can appear extremely different from other AGN even if they are intrinsically identical.

Many of the brighter blazars were first identified, not as powerful distant galaxies, but as irregular variable stars in our own galaxy. These blazars, like genuine irregular variable stars, changed in brightness on periods of days or years, but with no pattern.

The early development of radio astronomy had shown that there are many bright radio sources in the sky. By the end of the 1950s, the resolution of radio telescopes was sufficient to identify specific radio sources with optical counterparts, leading to the discovery of quasars. Blazars were highly represented among these early quasars, and the first redshift was found for 3C 273, a highly variable quasar which is also a blazar.

In 1968, a similar connection was made between the "variable star" BL Lacertae and a powerful radio source VRO 42.22.01.[7] BL Lacertae shows many of the characteristics of quasars, but the optical spectrum was devoid of the spectral lines used to determine redshift. Faint indications of an underlying galaxy—proof that BL Lacertae was not a star—were found in 1974.

The extragalactic nature of BL Lacertae was not a surprise. In 1972 a few variable optical and radio sources were grouped together and proposed as a new class of galaxy: BL Lacertae-type objects. This terminology was soon shortened to "BL Lacertae object", "BL Lac object" or simply "BL Lac". (The latter term can also mean the original individual blazar and not the entire class.)

As of 2003, a few hundred BL Lac objects were known. One of the closest blazars is 2.5 billion light years away.[8][9]

Blazars are thought to be active galactic nuclei, with relativistic jets oriented close to the line of sight with the observer.

The special jet orientation explains the general peculiar characteristics: high observed luminosity, very rapid variation, high polarization (compared to non-blazar quasars), and the apparent superluminal motions detected along the first few parsecs of the jets in most blazars.

A Unified Scheme or Unified Model has become generally accepted, where highly variable quasars are related to intrinsically powerful radio galaxies, and BL Lac objects are related to intrinsically weak radio galaxies.[10] The distinction between these two connected populations explains the difference in emission line properties in blazars.[11]

Other explanations for the relativistic jet/unified scheme approach which have been proposed include gravitational microlensing and coherent emission from the relativistic jet. Neither of these explains the overall properties of blazars. For example, microlensing is achromatic. That is, all parts of a spectrum would rise and fall together. This is not observed in blazars. However, it is possible that these processes, as well as more complex plasma physics, can account for specific observations or some details.

Examples of blazars include 3C 454.3, 3C 273, BL Lacertae, PKS 2155-304, Markarian 421, Markarian 501, 4C +71.07, PKS 0537-286 (QSO 0537-286) and S5 0014+81. Markarian 501 and S5 0014+81 are also called "TeV Blazars" for their high energy (teraelectron-volt range) gamma-ray emission.

In July 2018, a blazar called TXS 0506+056[12] was identified as source of high-energy neutrinos by the IceCube project.[5][6][13]

OK, that's it. I also wanted to add pulsars, blazars, supernovas and all that other stuff.

But listen, I am not that free.

If you have any questions, then keep them to yourself.

See ya.

6-Unicode:

Unicode, formally The Unicode Standard, is a text encoding standard maintained by the Unicode Consortium designed to support the use of text in all of the world's writing systems that can be digitized. Version 16.0 of the standard defines 154,998 characters and 168 scripts used in various ordinary, literary, academic, and technical contexts.

Unicode

| Field | Value |
|---|---|
| Language(s) | 168 scripts |
| Standard | Unicode Standard |
| Preceded by | ISO/IEC 8859, among others |

Without proper rendering support, you may see question marks, boxes, or other symbols.

Many common characters, including numerals, punctuation, and other symbols, are unified within the standard and are not treated as specific to any given writing system. Unicode encodes 3790 emoji, with the continued development thereof conducted by the Consortium as a part of the standard. Moreover, the widespread adoption of Unicode was in large part responsible for the initial popularization of emoji outside of Japan. Unicode is ultimately capable of encoding more than 1.1 million characters.

Unicode has largely supplanted the previous environment of a myriad of incompatible character sets, each used within different locales and on different computer architectures. Unicode is used to encode the vast majority of text on the Internet, including most web pages, and relevant Unicode support has become a common consideration in contemporary software development.

The Unicode character repertoire is synchronized with ISO/IEC 10646, each being code-for-code identical with one another. However, The Unicode Standard is more than just a repertoire within which characters are assigned. To aid developers and designers, the standard also provides charts and reference data, as well as annexes explaining concepts germane to various scripts, providing guidance for their implementation. Topics covered by these annexes include character normalization, character composition and decomposition, collation, and directionality.

Unicode text is processed and stored as binary data using one of several encodings, which define how to translate the standard's abstracted codes for characters into sequences of bytes. The Unicode Standard itself defines three encodings: UTF-8, UTF-16, and UTF-32, though several others exist. Of these, UTF-8 is the most widely used by a large margin, in part due to its backwards-compatibility with ASCII.
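To make the difference between the three encodings concrete, here is a small sketch using Python's built-in codecs. The sample characters and the helper name `encoded_sizes` are picked purely for illustration.

```python
# Sample code points chosen for illustration: U+0041, U+00E9, U+20AC, U+1F600.
SAMPLES = ["A", "\u00e9", "\u20ac", "\U0001F600"]
ENCODINGS = ("utf-8", "utf-16-be", "utf-32-be")


def encoded_sizes(ch: str) -> dict:
    """Return how many bytes each encoding needs for a single character."""
    return {name: len(ch.encode(name)) for name in ENCODINGS}


if __name__ == "__main__":
    for ch in SAMPLES:
        print(f"U+{ord(ch):04X}", encoded_sizes(ch))
    # ASCII text is byte-for-byte identical in UTF-8.
    print("ASCII".encode("ascii") == "ASCII".encode("utf-8"))
```

UTF-8 and UTF-16 are variable-length, while UTF-32 always uses four bytes per code point.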

Adoption:

Unicode, in the form of UTF-8, has been the most common encoding for the World Wide Web since 2008. It has near-universal adoption, and much of the non-UTF-8 content is found in other Unicode encodings, e.g. UTF-16. As of 2024, UTF-8 accounts for on average 98.3% of all web pages (and 983 of the top 1,000 highest-ranked web pages). Although many pages only use ASCII characters to display content, UTF-8 was designed with ASCII as a subset, and almost no websites now declare their encoding to only be ASCII instead of UTF-8. Over a third of the languages tracked have 100% UTF-8 use.

All internet protocols maintained by the Internet Engineering Task Force, e.g. FTP, have required support for UTF-8 since the publication of RFC 2277 in 1998, which specified that all IETF protocols "MUST be able to use the UTF-8 charset".

Operating systems

Unicode has become the dominant scheme for the internal processing and storage of text. Although a great deal of text is still stored in legacy encodings, Unicode is used almost exclusively for building new information processing systems. Early adopters tended to use UCS-2 (the fixed-length two-byte obsolete precursor to UTF-16) and later moved to UTF-16 (the variable-length current standard), as this was the least disruptive way to add support for non-BMP characters. The best known such system is Windows NT (and its descendants, 2000, XP, Vista, 7, 8, 10, and 11), which uses UTF-16 as the sole internal character encoding. The Java and .NET bytecode environments, macOS, and KDE also use it for internal representation. Partial support for Unicode can be installed on Windows 9x through the Microsoft Layer for Unicode.
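The reason UCS-2 could not simply be kept is that a single 16-bit unit cannot reach characters outside the BMP; UTF-16 represents them as a pair of surrogate code units. A minimal sketch of that arithmetic follows (the function name `to_surrogates` is made up for this example), checked against Python's own UTF-16 codec.

```python
from typing import Tuple


def to_surrogates(code_point: int) -> Tuple[int, int]:
    """Split a non-BMP code point into a UTF-16 (high, low) surrogate pair."""
    if not 0x10000 <= code_point <= 0x10FFFF:
        raise ValueError("only code points above U+FFFF need surrogates")
    offset = code_point - 0x10000          # 20 significant bits remain
    high = 0xD800 + (offset >> 10)         # top 10 bits
    low = 0xDC00 + (offset & 0x3FF)        # bottom 10 bits
    return high, low


if __name__ == "__main__":
    high, low = to_surrogates(0x1F600)
    print(f"U+1F600 -> {high:04X} {low:04X}")
    # Cross-check against the built-in codec.
    print("\U0001F600".encode("utf-16-be").hex())
```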

UTF-8 (originally developed for Plan 9)[80] has become the main storage encoding on most Unix-like operating systems (though others are also used by some libraries) because it is a relatively easy replacement for traditional extended ASCII character sets. UTF-8 is also the most common Unicode encoding used in HTML documents on the World Wide Web.

Multilingual text-rendering engines which use Unicode include Uniscribe and DirectWrite for Microsoft Windows, ATSUI and Core Text for macOS, and Pango for GTK+ and the GNOME desktop.

Input methods

Because keyboard layouts cannot have simple key combinations for all characters, several operating systems provide alternative input methods that allow access to the entire repertoire.

ISO/IEC 14755, which standardises methods for entering Unicode characters from their code points, specifies several methods. There is the Basic method, where a beginning sequence is followed by the hexadecimal representation of the code point and the ending sequence. There is also a screen-selection entry method specified, where the characters are listed in a table on a screen, such as with a character map program.

Online tools for finding the code point for a known character include Unicode Lookup by Jonathan Hedley and Shapecatcher by Benjamin Milde. In Unicode Lookup, one enters a search key (e.g. "fractions"), and a list of corresponding characters with their code points is returned. In Shapecatcher, based on Shape context, one draws the character in a box and a list of characters approximating the drawing, with their code points, is returned.
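The two ideas here, turning a hexadecimal code point into a character and searching for characters by keyword, can be imitated with Python's `unicodedata` module. This is only an analogy: it is not an implementation of ISO/IEC 14755 or of either online tool, and the helper names and the default search limit are invented for the example.

```python
import unicodedata


def char_from_hex(hex_digits: str) -> str:
    """Turn a hexadecimal code point such as '20AC' into the character."""
    return chr(int(hex_digits, 16))


def search_by_name(keyword: str, limit: int = 0x3000) -> list:
    """List (code point, name) pairs whose character name contains keyword.

    Only code points below `limit` are scanned to keep the search quick.
    """
    keyword = keyword.upper()
    hits = []
    for cp in range(limit):
        name = unicodedata.name(chr(cp), "")  # "" for unnamed code points
        if keyword in name:
            hits.append((f"U+{cp:04X}", name))
    return hits


if __name__ == "__main__":
    print(char_from_hex("20AC"), unicodedata.name(char_from_hex("20AC")))
    for code, name in search_by_name("fraction")[:5]:
        print(code, name)
```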

Email

MIME defines two different mechanisms for encoding non-ASCII characters in email, depending on whether the characters are in email headers (such as the "Subject:"), or in the text body of the message; in both cases, the original character set is identified as well as a transfer encoding. For email transmission of Unicode, the UTF-8 character set and the Base64 or the Quoted-printable transfer encoding are recommended, depending on whether much of the message consists of ASCII characters. The details of the two different mechanisms are specified in the MIME standards and generally are hidden from users of email software.
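A hedged sketch of both mechanisms using Python's standard library: `email.header` for header values, and `base64` / `quopri` for the body. The helper names are made up for this example, and real mail software normally does all of this behind the scenes.

```python
import base64
import quopri
from email.header import Header, decode_header, make_header


def encode_subject(subject: str) -> str:
    """Encode a header value as a MIME encoded-word, declaring UTF-8."""
    return Header(subject, "utf-8").encode()


def decode_subject(raw: str) -> str:
    """Reverse encode_subject()."""
    return str(make_header(decode_header(raw)))


def body_encodings(text: str) -> dict:
    """Encode a UTF-8 body with both recommended transfer encodings."""
    raw = text.encode("utf-8")
    return {
        "base64": base64.b64encode(raw),
        "quoted-printable": quopri.encodestring(raw),
    }


if __name__ == "__main__":
    print(encode_subject("Gr\u00fc\u00dfe"))
    for sample in ("Hello, world", "\u3053\u3093\u306b\u3061\u306f"):
        sizes = {k: len(v) for k, v in body_encodings(sample).items()}
        print(repr(sample), sizes)
```

Quoted-printable tends to be more compact when most of the text is ASCII, while Base64 wins when most bytes are non-ASCII, which is the trade-off the paragraph describes.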

The IETF has defined a framework for internationalized email using UTF-8, and has updated several protocols in accordance with that framework.

The adoption of Unicode in email has been very slow. Some East Asian text is still encoded in encodings such as ISO-2022, and some devices, such as mobile phones, still cannot correctly handle Unicode data. Support has been improving, however. Many major free mail providers such as Yahoo! Mail, Gmail, and Outlook.com support it.

Web

All W3C recommendations have used Unicode as their document character set since HTML 4.0. Web browsers have supported Unicode, especially UTF-8, for many years. There used to be display problems resulting primarily from font-related issues; e.g. version 6 and older of Microsoft Internet Explorer did not render many code points unless explicitly told to use a font that contains them.

Although syntax rules may affect the order in which characters are allowed to appear, XML (including XHTML) documents, by definition,[91] comprise characters from most of the Unicode code points, with the exception of:

- most of the C0 control codes,

- the permanently unassigned code points D800–DFFF,

- FFFE or FFFF.
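The exclusions in this list can be expressed as a simple range check. The sketch below follows the `Char` production of XML 1.0 as I understand it (tab, line feed and carriage return are the only C0 controls allowed); the function name is hypothetical.

```python
def is_xml10_char(code_point: int) -> bool:
    """Return True if the code point may appear in an XML 1.0 document."""
    return (
        code_point in (0x9, 0xA, 0xD)          # the C0 controls that are allowed
        or 0x20 <= code_point <= 0xD7FF        # up to just before the surrogates
        or 0xE000 <= code_point <= 0xFFFD      # skips D800-DFFF, FFFE and FFFF
        or 0x10000 <= code_point <= 0x10FFFF   # supplementary planes
    )


if __name__ == "__main__":
    for cp in (0x0, 0x9, 0x41, 0xD800, 0xFFFE, 0x1F600):
        print(f"U+{cp:04X}", is_xml10_char(cp))
```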

When specifying URIs, for example as URLs in HTTP requests, non-ASCII characters must be percent-encoded.
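For example, percent-encoding can be done with `urllib.parse` from Python's standard library, which encodes the text as UTF-8 and then escapes each non-ASCII byte. The wrapper name and the sample path are illustrative only.

```python
from urllib.parse import quote, unquote


def percent_encode_path(path: str) -> str:
    """Percent-encode a URL path, leaving '/' separators untouched."""
    return quote(path, safe="/")


if __name__ == "__main__":
    encoded = percent_encode_path("/wiki/Gr\u00fc\u00dfe")
    print(encoded)
    print(unquote(encoded))
```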

Fonts

Unicode is not in principle concerned with fonts per se, seeing them as implementation choices. Any given character may have many allographs, from the more common bold, italic and base letterforms to complex decorative styles. A font is "Unicode compliant" if the glyphs in the font can be accessed using code points defined in The Unicode Standard. The standard does not specify a minimum number of characters that must be included in the font; some fonts have quite a small repertoire.

Free and retail fonts based on Unicode are widely available, since TrueType and OpenType support Unicode (and Web Open Font Format (WOFF and WOFF2) is based on those). These font formats map Unicode code points to glyphs, but OpenType and TrueType font files are restricted to 65,535 glyphs. Collection files provide a "gap mode" mechanism for overcoming this limit in a single font file. (Each font within the collection still has the 65,535 limit, however.) A TrueType Collection file would typically have a file extension of ".ttc".

Thousands of fonts exist on the market, but fewer than a dozen fonts—sometimes described as "pan-Unicode" fonts—attempt to support the majority of Unicode's character repertoire. Instead, Unicode-based fonts typically focus on supporting only basic ASCII and particular scripts or sets of characters or symbols. Several reasons justify this approach: applications and documents rarely need to render characters from more than one or two writing systems; fonts tend to demand resources in computing environments; and operating systems and applications show increasing intelligence in regard to obtaining glyph information from separate font files as needed, i.e., font substitution. Furthermore, designing a consistent set of rendering instructions for tens of thousands of glyphs constitutes a monumental task; such a venture passes the point of diminishing returns for most typefaces.

Newlines

Unicode partially addresses the newline problem that occurs when trying to read a text file on different platforms. Unicode defines a large number of characters that conforming applications should recognize as line terminators.

In terms of the newline, Unicode introduced U+2028 LINE SEPARATOR and U+2029 PARAGRAPH SEPARATOR. This was an attempt to provide a Unicode solution to encoding paragraphs and lines semantically, potentially replacing all of the various platform solutions. In doing so, Unicode does provide a way around the historical platform-dependent solutions. Nonetheless, few if any Unicode solutions have adopted these Unicode line and paragraph separators as the sole canonical line ending characters. However, a common approach to solving this issue is through newline normalization. This is achieved with the Cocoa text system in macOS and also with W3C XML and HTML recommendations. In this approach, every possible newline character is converted internally to a common newline (which one does not really matter since it is an internal operation just for rendering). In other words, the text system can correctly treat the character as a newline, regardless of the input's actual encoding.
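Newline normalization of this kind can be sketched in a few lines. The set of terminators below (CR LF, LF, VT, FF, CR, NEL, LINE SEPARATOR, PARAGRAPH SEPARATOR) is my reading of what counts as a line terminator, and the choice of LF as the internal form is arbitrary; this is not the Cocoa or W3C implementation.

```python
import re

# CR LF must come first so that it is replaced as a single terminator.
_LINE_TERMINATORS = re.compile("\r\n|[\n\x0b\x0c\r\x85\u2028\u2029]")


def normalize_newlines(text: str) -> str:
    """Replace every recognised line terminator with a single LF."""
    return _LINE_TERMINATORS.sub("\n", text)


if __name__ == "__main__":
    mixed = "unix\nwindows\r\nold mac\rnel\x85ls\u2028ps\u2029end"
    print(normalize_newlines(mixed).split("\n"))
```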

List of Unicode characters

As of Unicode version 16.0, there are 155,063 characters with code points (154,998 graphic and format characters plus 65 control codes), covering 168 modern and historical scripts, as well as multiple symbol sets. This includes the 1,062 characters in the Multilingual European Character Set 2 (MES-2) subset, and some additional related characters.

Character reference overview

Index of predominant national and selected regional or minority scripts (entries that survived): Arabic, Hebrew, North Indic, South Indic, Ethiopic, Thaana, Canadian syllabics.

HTML and XML provide ways to reference Unicode characters when the characters themselves either cannot or should not be used. A numeric character reference refers to a character by its Universal Character Set/Unicode code point, and a character entity reference refers to a character by a predefined name.

A numeric character reference uses the format

&#nnnn;

or

&#xhhhh;

where nnnn is the code point in decimal form, and hhhh is the code point in hexadecimal form. The x must be lowercase in XML documents. The nnnn or hhhh may be any number of digits and may include leading zeros. The hhhh may mix uppercase and lowercase, though uppercase is the usual style.
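A short sketch of writing and resolving numeric character references, using `html.unescape` from Python's standard library for the decoding side. The helper `to_ncr` is a made-up name for this example.

```python
import html


def to_ncr(ch: str, hexadecimal: bool = True) -> str:
    """Format one character as a numeric character reference."""
    cp = ord(ch)
    # Lowercase 'x' keeps the hexadecimal form valid in XML as well as HTML.
    return f"&#x{cp:X};" if hexadecimal else f"&#{cp};"


if __name__ == "__main__":
    print(to_ncr("\u20ac"), to_ncr("\u20ac", hexadecimal=False))
    # Decimal, hexadecimal, leading zeros and mixed case all resolve alike.
    for ref in ("&#8364;", "&#x20AC;", "&#x0020ac;"):
        print(ref, "->", html.unescape(ref))
```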

In contrast, a character entity reference refers to a character by the name of an entity which has the desired character as its replacement text. The entity must either be predefined (built into the markup language) or explicitly declared in a Document Type Definition (DTD). The format is the same as for any entity reference:

&name;

where name is the case-sensitive name of the entity. The semicolon is required.

Because numbers are harder for humans to remember than names, character entity references are most often written by humans, while numeric character references are most often produced by computer programs.
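For the named form, Python's `html.entities` module exposes the predefined HTML entity names, which makes the case sensitivity easy to see. This only covers HTML's built-in names, not entities declared in a DTD, and the helper name is invented for the example.

```python
import html
from html.entities import codepoint2name, name2codepoint


def entity_for(ch: str) -> str:
    """Return the named reference for a character, or a numeric one as fallback."""
    name = codepoint2name.get(ord(ch))
    return f"&{name};" if name else f"&#x{ord(ch):X};"


if __name__ == "__main__":
    print(entity_for("\u20ac"), entity_for("\u0416"))
    # Entity names are case-sensitive: these are two different characters.
    print(hex(name2codepoint["eacute"]), hex(name2codepoint["Eacute"]))
    print(html.unescape("&euro; &eacute; &Eacute;"))
```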

Control codes

65 characters, including DEL. All belong to the common script.

| Code | Decimal | Octal | Description | Abbreviation / Key | |

|---|---|---|---|---|---|

| Category | Code | Decimal | Octal | Description | Abbreviation |
|---|---|---|---|---|---|
| C0 | U+0000 | 0 | 000 | Null character | NUL |
| | U+0001 | 1 | 001 | Start of Heading | SOH / Ctrl-A |
| | U+0002 | 2 | 002 | Start of Text | STX / Ctrl-B |
| | U+0003 | 3 | 003 | End-of-text character | ETX / Ctrl-C [note 1] |
| | U+0004 | 4 | 004 | End-of-transmission character | EOT / Ctrl-D [note 2] |
| | U+0005 | 5 | 005 | Enquiry character | ENQ / Ctrl-E |
| | U+0006 | 6 | 006 | Acknowledge character | ACK / Ctrl-F |
| | U+0007 | 7 | 007 | Bell character | BEL / Ctrl-G [note 3] |
| | U+0008 | 8 | 010 | Backspace | BS / Ctrl-H |
| | U+0009 | 9 | 011 | Horizontal tab | HT / Ctrl-I |
| | U+000A | 10 | 012 | Line feed | LF / Ctrl-J [note 4] |
| | U+000B | 11 | 013 | Vertical tab | VT / Ctrl-K |
| | U+000C | 12 | 014 | Form feed | FF / Ctrl-L |
| | U+000D | 13 | 015 | Carriage return | CR / Ctrl-M [note 5] |
| | U+000E | 14 | 016 | Shift Out | SO / Ctrl-N |
| | U+000F | 15 | 017 | Shift In | SI / Ctrl-O [note 6] |
| | U+0010 | 16 | 020 | Data Link Escape | DLE / Ctrl-P |
| | U+0011 | 17 | 021 | Device Control 1 | DC1 / Ctrl-Q [note 7] |
| | U+0012 | 18 | 022 | Device Control 2 | DC2 / Ctrl-R |
| | U+0013 | 19 | 023 | Device Control 3 | DC3 / Ctrl-S [note 8] |
| | U+0014 | 20 | 024 | Device Control 4 | DC4 / Ctrl-T |
| | U+0015 | 21 | 025 | Negative-acknowledge character | NAK / Ctrl-U [note 9] |
| | U+0016 | 22 | 026 | Synchronous Idle | SYN / Ctrl-V |
| | U+0017 | 23 | 027 | End of Transmission Block | ETB / Ctrl-W |
| | U+0018 | 24 | 030 | Cancel character | CAN / Ctrl-X [note 10] |
| | U+0019 | 25 | 031 | End of Medium | EM / Ctrl-Y |
| | U+001A | 26 | 032 | Substitute character | SUB / Ctrl-Z [note 11] |
| | U+001B | 27 | 033 | Escape character | ESC |
| | U+001C | 28 | 034 | File Separator | FS |
| | U+001D | 29 | 035 | Group Separator | GS |
| | U+001E | 30 | 036 | Record Separator | RS |
| | U+001F | 31 | 037 | Unit Separator | US |
| | U+007F | 127 | 0177 | Delete | DEL |
| C1 | U+0080 | 128 | 0302 0200 | Padding Character | PAD |
| | U+0081 | 129 | 0302 0201 | High Octet Preset | HOP |
| | U+0082 | 130 | 0302 0202 | Break Permitted Here | BPH |
| | U+0083 | 131 | 0302 0203 | No Break Here | NBH |
| | U+0084 | 132 | 0302 0204 | Index | IND |
| | U+0085 | 133 | 0302 0205 | Next Line | NEL |
| | U+0086 | 134 | 0302 0206 | Start of Selected Area | SSA |
| | U+0087 | 135 | 0302 0207 | End of Selected Area | ESA |
| | U+0088 | 136 | 0302 0210 | Character Tabulation Set | HTS |
| | U+0089 | 137 | 0302 0211 | Character Tabulation with Justification | HTJ |
| | U+008A | 138 | 0302 0212 | Line Tabulation Set | VTS |
| | U+008B | 139 | 0302 0213 | Partial Line Forward | PLD |
| | U+008C | 140 | 0302 0214 | Partial Line Backward | PLU |
| | U+008D | 141 | 0302 0215 | Reverse Line Feed | RI |
| | U+008E | 142 | 0302 0216 | Single-Shift Two | SS2 |
| | U+008F | 143 | 0302 0217 | Single-Shift Three | SS3 |
| | U+0090 | 144 | 0302 0220 | Device Control String | DCS |
| | U+0091 | 145 | 0302 0221 | Private Use 1 | PU1 |
| | U+0092 | 146 | 0302 0222 | Private Use 2 | PU2 |
| | U+0093 | 147 | 0302 0223 | Set Transmit State | STS |
| | U+0094 | 148 | 0302 0224 | Cancel character | CCH |
| | U+0095 | 149 | 0302 0225 | Message Waiting | MW |
| | U+0096 | 150 | 0302 0226 | Start of Protected Area | SPA |
| | U+0097 | 151 | 0302 0227 | End of Protected Area | EPA |
| | U+0098 | 152 | 0302 0230 | Start of String | SOS |
| | U+0099 | 153 | 0302 0231 | Single Graphic Character Introducer | SGCI |
| | U+009A | 154 | 0302 0232 | Single Character Introducer | SCI |
| | U+009B | 155 | 0302 0233 | Control Sequence Introducer | CSI |
| | U+009C | 156 | 0302 0234 | String Terminator | ST |
| | U+009D | 157 | 0302 0235 | Operating System Command | OSC |
| | U+009E | 158 | 0302 0236 | Private Message | PM |
| | U+009F | 159 | 0302 0237 | Application Program Command | APC |
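To see where the columns come from, here is a rough Python sketch (the function names `table_row` and `ctrl_key` are made up for this post, not part of any library). It assumes the two-number octal entries in the C1 rows are the UTF-8 bytes of the character written in octal, which matches the values listed apart from the leading zero, and that the Ctrl-letter column follows the usual terminal convention of keeping only the low five bits of the letter.

```python
def table_row(code_point: int) -> dict:
    """Rebuild the Code / Decimal / Octal columns for one code point."""
    utf8 = chr(code_point).encode("utf-8")
    return {
        "code": f"U+{code_point:04X}",
        "decimal": code_point,
        # Octal of each UTF-8 byte; C1 controls take two bytes in UTF-8.
        "octal": " ".join(f"{byte:03o}" for byte in utf8),
    }


def ctrl_key(letter: str) -> int:
    """Code point conventionally sent by Ctrl plus an ASCII letter."""
    return ord(letter.upper()) & 0x1F


print(table_row(0x0A))  # line feed
print(table_row(0x85))  # next line (C1)
print(ctrl_key("C"))    # 3, i.e. U+0003 (ETX)
```

So Ctrl-Z works out to 26 (U+001A), which is the SUB row above.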

Footnotes:

1. Control-C has typically been used as a "break" or "interrupt" key.
2. Control-D has been used to signal "end of file" for text typed in at the terminal on Unix / Linux systems. Windows, DOS, and older minicomputers used Control-Z for this purpose.
3. Control-G is an artifact of the days when teletypes were in use. Important messages could be signalled by striking the bell on the teletype. This was carried over on PCs by generating a buzz sound.
4. Line feed is used for "end of line" in text files on Unix / Linux systems.
5. Carriage return (accompanied by line feed) is used as the "end of line" character by Windows, DOS, and most minicomputers other than Unix- / Linux-based systems.
6. Control-O has been the "discard output" key. Output is not sent to the terminal, but discarded, until another Control-O is typed.
7. Control-Q has been used to tell a host computer to resume sending output after it was stopped by Control-S.
8. Control-S has been used to tell a host computer to postpone sending output to the terminal. Output is suspended until restarted by the Control-Q key.
9. Control-U was originally used by Digital Equipment Corporation computers to cancel the current line of typed-in text. Other manufacturers used Control-X for this purpose.
10. Control-X was commonly used to cancel a line of input typed in at the terminal.
11. Control-Z has commonly been used on minicomputers, Windows and DOS systems to indicate "end of file" either on a terminal or in a text file. Unix / Linux systems use Control-D to indicate end-of-file at a terminal.
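The line-ending difference in the footnotes (line feed alone on Unix / Linux, carriage return plus line feed on Windows and DOS) is the one that bites most often in practice. A small Python sketch of one way to normalize it; `normalize_newlines` is just an illustrative name, and treating a bare carriage return as a line ending too is an assumption on my part.

```python
def normalize_newlines(text: str) -> str:
    """Convert CR LF pairs and bare CR characters to a single LF."""
    # Replace the two-character pair first so it does not become two LFs.
    return text.replace("\r\n", "\n").replace("\r", "\n")


dos_text = "first\r\nsecond\r\n"
print(repr(normalize_newlines(dos_text)))  # 'first\nsecond\n'
```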

If you want to check out the rest: List of Unicode characters

There are still several more, such as numbers, symbols, fonts, and text in different languages, scripts, codes, and letters.

Check out this as well:

List of them

Astronomy:

Astronomy is a natural science that studies celestial objects and the phenomena that occur in the cosmos. It uses mathematics, physics, and chemistry in order to explain their origin and their overall evolution. Objects of interest include planets, moons, stars, nebulae, galaxies, meteoroids, asteroids, and comets. Relevant phenomena include supernova explosions, gamma ray bursts, quasars, blazars, pulsars, and cosmic microwave background radiation. More generally, astronomy studies everything that originates beyond Earth's atmosphere. Cosmology is a branch of astronomy that studies the universe as a whole.

The Paranal Observatory of European Southern Observatory shooting a laser guide star to the Galactic Center

Astronomy is one of the oldest natural sciences. The early civilizations in recorded history made methodical observations of the night sky. These include the Egyptians, Babylonians, Greeks, Indians, Chinese, Maya, and many ancient indigenous peoples of the Americas. In the past, astronomy included disciplines as diverse as astrometry, celestial navigation, observational astronomy, and the making of calendars.

Professional astronomy is split into observational and theoretical branches. Observational astronomy is focused on acquiring data from observations of astronomical objects. This data is then analyzed using basic principles of physics. Theoretical astronomy is oriented toward the development of computer or analytical models to describe astronomical objects and phenomena. These two fields complement each other. Theoretical astronomy seeks to explain observational results and observations are used to confirm theoretical results.

Astronomy is one of the few sciences in which amateurs play an active role. This is especially true for the discovery and observation of transient events. Amateur astronomers have helped with many important discoveries, such as finding new comets.

Observational astronomy

[Main article: Observational astronomy]

Overview of types of observational astronomy by observed wavelengths and their observability

The main source of information about celestial bodies and other objects is visible light, or more generally electromagnetic radiation.[51] Observational astronomy may be categorized according to the corresponding region of the electromagnetic spectrum on which the observations are made. Some parts of the spectrum can be observed from the Earth's surface, while other parts are only observable from either high altitudes or outside the Earth's atmosphere. Specific information on these subfields is given below.

Radio astronomy

The Very Large Array in New Mexico, an example of a radio telescope

[Main article: Radio astronomy]

Radio astronomy uses radiation with wavelengths greater than approximately one millimeter, outside the visible range.[52] Radio astronomy is different from most other forms of observational astronomy in that the observed radio waves can be treated as waves rather than as discrete photons. Hence, it is relatively easy to measure both the amplitude and phase of radio waves, which is not as easily done at shorter wavelengths.[52]

Although some radio waves are emitted directly by astronomical objects as a product of thermal emission, most of the radio emission that is observed is the result of synchrotron radiation, which is produced when electrons orbit magnetic field lines.[52] Additionally, a number of spectral lines produced by interstellar gas, notably the hydrogen spectral line at 21 cm, are observable at radio wavelengths.[8][52]
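To put the 21 cm figure into frequency terms, here is a quick Python sketch using frequency = speed of light / wavelength. The function name `frequency_hz` is only for illustration, and 0.21 m is the rounded wavelength quoted above, so the result is approximate (the line is usually quoted at about 1420 MHz, corresponding to a wavelength slightly longer than 21 cm).

```python
SPEED_OF_LIGHT = 299_792_458.0  # metres per second, exact by definition


def frequency_hz(wavelength_m: float) -> float:
    """Frequency of an electromagnetic wave with the given wavelength."""
    return SPEED_OF_LIGHT / wavelength_m


hydrogen_line = frequency_hz(0.21)  # rounded 21 cm wavelength
print(f"{hydrogen_line / 1e9:.2f} GHz")  # 1.43 GHz
```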

A wide variety of other objects are observable at radio wavelengths, including supernovae, interstellar gas, pulsars, and active galactic nuclei.[8][52]

Infrared astronomy

ALMA Observatory is one of the highest observatory sites on Earth. Atacama, Chile.[53]

[Main article: Infrared astronomy]

Infrared astronomy is founded on the detection and analysis of infrared radiation, wavelengths longer than red light and outside the range of our vision. The infrared spectrum is useful for studying objects that are too cold to radiate visible light, such as planets, circumstellar disks or nebulae whose light is blocked by dust. The longer wavelengths of infrared can penetrate clouds of dust that block visible light, allowing the observation of young stars embedded in molecular clouds and the cores of galaxies. Observations from the Wide-field Infrared Survey Explorer (WISE) have been particularly effective at unveiling numerous galactic protostars and their host star clusters.[54][55] With the exception of infrared wavelengths close to visible light, such radiation is heavily absorbed by the atmosphere, or masked, as the atmosphere itself produces significant infrared emission. Consequently, infrared observatories have to be located in high, dry places on Earth or in space.[56] Some molecules radiate strongly in the infrared. This allows the study of the chemistry of space; more specifically it can detect water in comets.[57]

Optical astronomy

The Subaru Telescope (left) and Keck Observatory (center) on Mauna Kea, both examples of an observatory that operates at near-infrared and visible wavelengths. The NASA Infrared Telescope Facility (right) is an example of a telescope that operates only at near-infrared wavelengths.

[Main article: Optical astronomy]

Historically, optical astronomy, also called visible light astronomy, is the oldest form of astronomy.[58] Images of observations were originally drawn by hand. In the late 19th century and most of the 20th century, images were made using photographic equipment. Modern images are made using digital detectors, particularly charge-coupled devices (CCDs), and recorded on modern media. Although visible light itself extends from approximately 4000 Å to 7000 Å (400 nm to 700 nm),[58] that same equipment can be used to observe some near-ultraviolet and near-infrared radiation.

Ultraviolet astronomy

[Main article: Ultraviolet astronomy]

Ultraviolet astronomy employs ultraviolet wavelengths between approximately 100 and 3200 Å (10 to 320 nm).[52] Light at those wavelengths is absorbed by the Earth's atmosphere, requiring observations at these wavelengths to be performed from the upper atmosphere or from space. Ultraviolet astronomy is best suited to the study of thermal radiation and spectral emission lines from hot blue stars (OB stars) that are very bright in this wave band. This includes the blue stars in other galaxies, which have been the targets of several ultraviolet surveys. Other objects commonly observed in ultraviolet light include planetary nebulae, supernova remnants, and active galactic nuclei.[52] However, as ultraviolet light is easily absorbed by interstellar dust, an adjustment of ultraviolet measurements is necessary.[52]
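The wavelength ranges quoted so far (radio above roughly 1 mm, visible 4000 to 7000 Å, ultraviolet 100 to 3200 Å) can be turned into a small lookup. This is only a sketch: `angstrom_to_nm` and `classify_band` are made-up names, the boundaries are the approximate ones from the text, and anything the text gives no numbers for (infrared, X-ray, gamma ray, and the gap between 320 and 400 nm) is simply reported as unclassified.

```python
def angstrom_to_nm(wavelength_angstrom: float) -> float:
    """Convert angstroms to nanometres (10 angstroms = 1 nm)."""
    return wavelength_angstrom / 10.0


def classify_band(wavelength_nm: float) -> str:
    """Name the band for a wavelength, using only ranges given in the text."""
    if wavelength_nm > 1e6:  # longer than about 1 mm
        return "radio"
    if 400 <= wavelength_nm <= 700:
        return "visible"
    if 10 <= wavelength_nm <= 320:
        return "ultraviolet"
    return "unclassified"


print(classify_band(angstrom_to_nm(5500)))  # visible
print(classify_band(angstrom_to_nm(1216)))  # ultraviolet
print(classify_band(0.21 * 1e9))            # radio (21 cm in nm)
```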

X-ray astronomy

[Main article: X-ray astronomy]

X-ray jet made from a supermassive black hole found by NASA's Chandra X-ray Observatory, made visible by light from the early Universe

X-ray astronomy uses X-ray wavelengths. Typically, X-ray radiation is produced by synchrotron emission (the result of electrons orbiting magnetic field lines), thermal emission from thin gases above 10^7 (10 million) kelvins, and thermal emission from thick gases above 10^7 kelvins.[52] Since X-rays are absorbed by the Earth's atmosphere, all X-ray observations must be performed from high-altitude balloons, rockets, or X-ray astronomy satellites. Notable X-ray sources include X-ray binaries, pulsars, supernova remnants, elliptical galaxies, clusters of galaxies, and active galactic nuclei.[52]
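A rough way to see why gas at around ten million kelvins shows up in X-rays is Wien's displacement law, which gives the peak wavelength of a blackbody as a constant divided by its temperature. The text above does not mention this law, so take it as an added assumption; it only applies to the thick (blackbody-like) case, and `peak_wavelength_nm` is an illustrative name.

```python
WIEN_CONSTANT = 2.897771955e-3  # metre-kelvins


def peak_wavelength_nm(temperature_k: float) -> float:
    """Blackbody peak wavelength in nanometres (Wien's displacement law)."""
    return WIEN_CONSTANT / temperature_k * 1e9


print(f"{peak_wavelength_nm(1e7):.2f} nm")  # 0.29 nm
```

A peak near 0.3 nm is far shorter than the 10 nm lower edge of the ultraviolet range, which is consistent with such gas being observed in X-rays.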

Gamma-ray astronomy

[Main article: Gamma ray astronomy]

Gamma ray astronomy observes astronomical objects at the shortest wavelengths of the electromagnetic spectrum. Gamma rays may be observed directly by satellites such as the Compton Gamma Ray Observatory or by specialized telescopes called atmospheric Cherenkov telescopes.[52] The Cherenkov telescopes do not detect the gamma rays directly but instead detect the flashes of visible light produced when gamma rays are absorbed by the Earth's atmosphere.[59]

Most gamma-ray emitting sources are actually gamma-ray bursts, objects which only produce gamma radiation for a few milliseconds to thousands of seconds before fading away. Only 10% of gamma-ray sources are non-transient sources. These steady gamma-ray emitters include pulsars, neutron stars, and black hole candidates such as active galactic nuclei.[52]

Fields not based on the electromagnetic spectrum

In addition to electromagnetic radiation, a few other events originating from great distances may be observed from the Earth.

In neutrino astronomy, astronomers use heavily shielded underground facilities such as SAGE, GALLEX, and Kamioka II/III for the detection of neutrinos. The vast majority of the neutrinos streaming through the Earth originate from the Sun, but 24 neutrinos were also detected from supernova 1987A.[52] Cosmic rays, which consist of very high energy particles (atomic nuclei) that can decay or be absorbed when they enter the Earth's atmosphere, result in a cascade of secondary particles which can be detected by current observatories.[60] Some future neutrino detectors may also be sensitive to the particles produced when cosmic rays hit the Earth's atmosphere.[52]

Gravitational-wave astronomy is an emerging field of astronomy that employs gravitational-wave detectors to collect observational data about distant massive objects. A few observatories have been constructed, such as the Laser Interferometer Gravitational-Wave Observatory (LIGO). LIGO made its first detection on 14 September 2015, observing gravitational waves from a binary black hole.[61] A second gravitational wave was detected on 26 December 2015, and additional observations should continue, but gravitational waves require extremely sensitive instruments.[62][63]

The combination of observations made using electromagnetic radiation, neutrinos, or gravitational waves with other complementary information is known as multi-messenger astronomy.[64][65]

Astrometry and celestial mechanics

[Main articles: Astrometry and Celestial mechanics] Star cluster Pismis 24 with a nebula